Choosing the right compute for ML training and inference

AWS offers the broadest and deepest set of services for quickly building and launching AI and machine learning for all types of organizations and industries. In this session, we explain how to deploy your inference models on AWS, which factors to consider, and how to optimize your deployments. Learn best practices and approaches to get your ML workloads running smoothly and efficiently on AWS. We share how Amazon Elastic Compute Cloud (Amazon EC2) Trn1n instances, powered by AWS Trainium, and Amazon EC2 Inf2 instances, powered by AWS Inferentia2, provide the most cost-effective cloud infrastructure for generative AI. Find out how Trn1n instances are used to train network-intensive generative AI models, such as large language models (LLMs) and mixture of experts (MoE) models, to deliver the highest performance. The session also showcases the use of Inf2 instances for generative AI models such as LLMs and vision transformers. Learn how Inf2 instances can be used to run popular applications such as text summarization, code generation, video and image generation, speech recognition, personalization, and more.

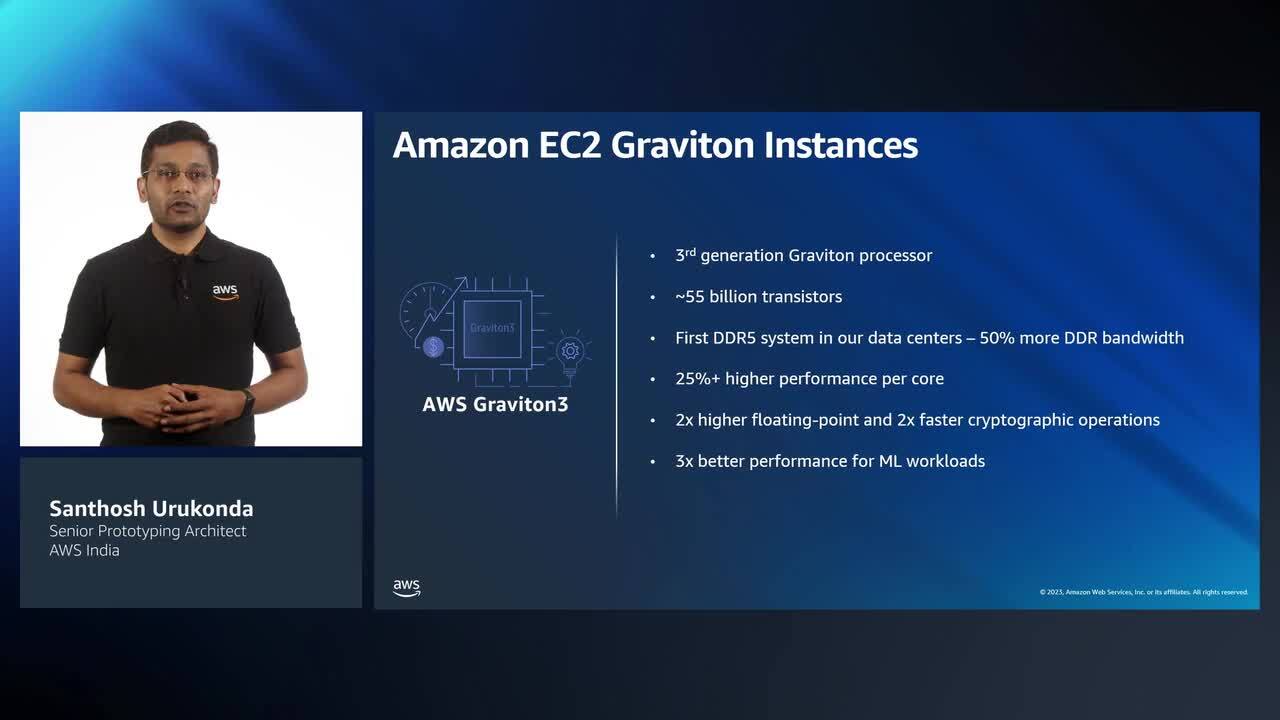

Speaker: Santhosh Urukonda, Senior Prototyping Engineer, AWS India